Email Signature Extraction with LLMs

Python · C · CUDA · C++ · OpenAI GPT-3.5 · Anthropic Claude 3 · Prompt Engineering

Overview

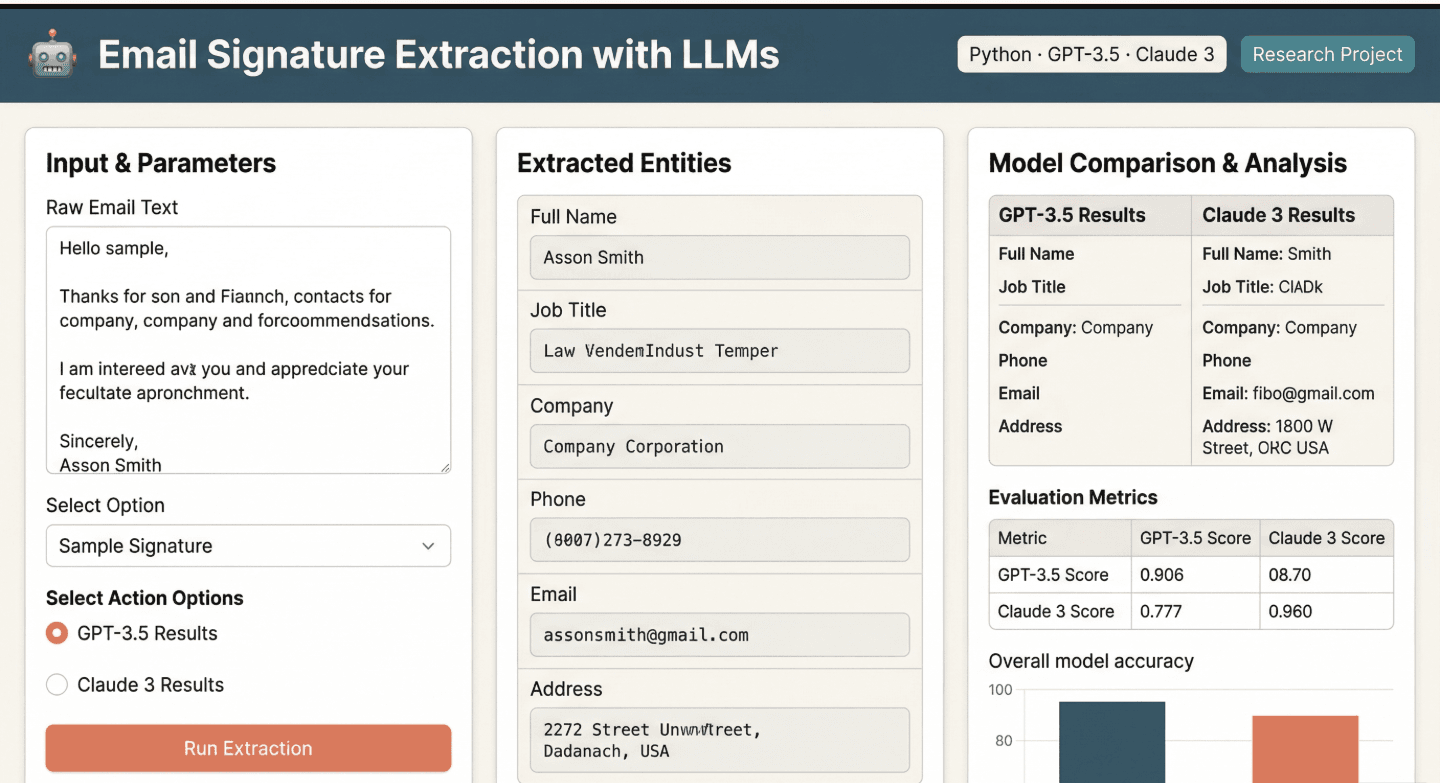

A Python-based evaluation framework that benchmarks two large language models on a structured data extraction task. Given raw email signature text, the system prompts both OpenAI GPT-3.5 Turbo and Anthropic Claude 3 to extract contact information and return it as structured JSON. It then measures and compares each model's accuracy, consistency, and output quality across a suite of test cases.
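To make the task concrete, here is a minimal sketch of what a few-shot extraction prompt could look like. The field names, the worked example, and the `build_prompt` helper are all assumptions made for illustration; the project's actual prompts and schema are not shown on this page.

```python
import json

# Hypothetical field names; the real schema used by the project is not shown here.
FIELDS = ["name", "title", "company", "email", "phone"]

# One invented worked example, used as the single "shot" in the prompt.
EXAMPLE_SIGNATURE = "Jane Doe\nSenior Engineer, Acme Corp\njane.doe@example.com\n+1 555 0100"
EXAMPLE_OUTPUT = {
    "name": "Jane Doe",
    "title": "Senior Engineer",
    "company": "Acme Corp",
    "email": "jane.doe@example.com",
    "phone": "+1 555 0100",
}


def build_prompt(signature: str) -> str:
    """Build one prompt string that can be sent unchanged to either model."""
    keys = ", ".join(f'"{field}"' for field in FIELDS)
    return (
        "Extract the contact information from the email signature below.\n"
        f"Return only a JSON object with the keys {keys}. "
        "Use null for any field that is missing.\n\n"
        # The worked example shows the model the exact output shape expected.
        f"Signature:\n{EXAMPLE_SIGNATURE}\nJSON:\n{json.dumps(EXAMPLE_OUTPUT)}\n\n"
        f"Signature:\n{signature}\nJSON:\n"
    )


if __name__ == "__main__":
    print(build_prompt("Bob Lee\nbob.lee@example.com"))
```

Sending the same prompt text to both models keeps the comparison about the models rather than about prompt differences, although whether the project does this is not stated.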

Challenges

- Designing prompts that reliably produce valid, consistently structured JSON output across two different LLMs with different response styles

- Building an evaluation framework that objectively measures and compares model performance without manual result inspection

- Handling the wide variability in email signature formats, from simple name/email pairs to complex multi-field corporate signatures
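One way the "different response styles" problem can show up is a model wrapping its JSON in a code fence or a sentence of explanation. The sketch below is a tolerant parser for that case; `parse_json_response` is a hypothetical helper, not a function taken from the project.

```python
import json
import re
from typing import Optional


def parse_json_response(text: str) -> Optional[dict]:
    """Return the JSON object embedded in a model reply, or None if none parses."""
    # First try the reply as-is, which covers models that return bare JSON.
    candidates = [text.strip()]
    # Then fall back to the span from the first "{" to the last "}",
    # which covers replies wrapped in prose or a code fence.
    match = re.search(r"\{.*\}", text, re.DOTALL)
    if match:
        candidates.append(match.group(0))
    for candidate in candidates:
        try:
            parsed = json.loads(candidate)
        except json.JSONDecodeError:
            continue
        if isinstance(parsed, dict):
            return parsed
    return None


if __name__ == "__main__":
    print(parse_json_response('Here is the result: {"name": "Jane Doe"}'))
```

Returning `None` instead of raising lets the caller count an unparseable reply as a failed test case rather than aborting the run.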

Solutions

- Iterated through multiple prompt engineering strategies, testing zero-shot, few-shot, and structured output prompting techniques to find the most consistent approach across both models

- Built an automated scoring pipeline in functions.py that parses JSON responses, validates field extraction, and computes accuracy metrics per test case

- Created a diverse test suite covering edge cases: missing fields, non-standard layouts, multilingual signatures, and varying levels of signature complexity
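The scoring step described above could be sketched roughly as follows. This is an illustration under assumptions, not the contents of functions.py: the function names, the normalization rule, and the choice to score per-field accuracy are all hypothetical.

```python
import json
from typing import List, Tuple


def _norm(value):
    """Normalize strings so case and surrounding whitespace do not count as errors."""
    return value.strip().lower() if isinstance(value, str) else value


def score_response(raw_response: str, expected: dict) -> float:
    """Fraction of expected fields the model's JSON matches after normalization."""
    try:
        predicted = json.loads(raw_response)
    except json.JSONDecodeError:
        return 0.0  # invalid JSON counts as a complete miss
    if not isinstance(predicted, dict) or not expected:
        return 0.0
    # A key absent from the prediction is treated like null, so it matches
    # an expected value of None (a field that is missing from the signature).
    hits = sum(
        1 for key, want in expected.items()
        if _norm(predicted.get(key)) == _norm(want)
    )
    return hits / len(expected)


def mean_accuracy(cases: List[Tuple[str, dict]]) -> float:
    """Average per-case score over (raw_response, expected) pairs."""
    if not cases:
        return 0.0
    return sum(score_response(raw, exp) for raw, exp in cases) / len(cases)


if __name__ == "__main__":
    expected = {"name": "Jane Doe", "email": "jane@example.com", "phone": None}
    print(score_response('{"name": "jane doe ", "email": "jane@example.com"}', expected))
```

Running `mean_accuracy` once per model over the same test cases gives one comparable number per model; stricter or fuzzier matching rules would slot into `_norm`.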

Languages

- Python: 98.6%

- C: 0.6%

- CUDA: 0.5%

- C++: 0.2%

- Cython: 0.1%

- Fortran: 0%

Languages & Tools

- Python

- C

- CUDA

- C++

- OpenAI GPT-3.5

- Anthropic Claude 3

- Prompt Engineering

Key Stats

- 2 LLMs evaluated (GPT-3.5 Turbo vs Claude 3)

- 2 GitHub stars